AI companion privacy varies dramatically between platforms. Telegram-native bots like HoneyChat require only a Telegram ID with no email or social login. Web platforms like Character.AI, Replika, and Candy AI require email or Google/Apple accounts and deploy cookies, analytics, and advertising trackers. This article compares exactly what six platforms collect, store, and share.

I did something most people don’t: I actually read the privacy policies.

Not the summary pages with reassuring language about “protecting your data.” The actual legal documents — the Terms of Service, the Privacy Policy, the Cookie Policy, the Data Processing Agreements. For six platforms. Some of them are over 5,000 words long and written in dense legal English designed to be technically accurate while functionally opaque.

What I found isn’t scandalous: nobody’s secretly selling your love letters to advertisers. But the differences between platforms are real and significant, and they matter more with AI companions than with almost any other type of software, because people tell AI companions things they wouldn’t post on social media, text to friends, or even admit to a therapist. The intimate nature of these conversations makes privacy not just a feature but a fundamental trust requirement.

Here’s what I found.

The data collection spectrum

Let me start with a concrete comparison of what each platform collects at signup and during use.

Data Collection Comparison — 2026

| | HoneyChat | Character.AI | Replika | Candy AI | Nomi AI | SillyTavern |
|---|---|---|---|---|---|---|
| Email required | No | Yes (Google/Apple) | Yes | Yes | Yes | No (local) |
| Real name | No | Via Google/Apple | Optional | Optional | Optional | No |
| Phone number | No (Telegram has it) | No | No | No | No | No |
| Payment data visible to app | No (Card/Stars/Crypto) | Via Apple/Google | Direct card | Direct card | Direct card | No (free) |
| Cookies | None (Telegram) | Analytics + ads | Analytics + ads | Analytics + FB Pixel | Analytics | None (local) |
| IP address logged | Telegram servers | Yes | Yes | Yes | Yes | No (local) |
| Device fingerprinting | No | Likely | Likely | Yes | Unknown | No |
| Chat data stored | Server (for memory) | Server | Server | Server | Server | Local only |

The spectrum runs from SillyTavern (collects literally nothing, because it runs on your own computer) to web platforms like Candy AI (email, cookies, Facebook Pixel, device data, and more). Telegram-native bots like HoneyChat sit in a middle ground — some server-side data storage is necessary for the service to work, but the identity layer is minimal.

Platform-by-platform breakdown

HoneyChat (Telegram-native)

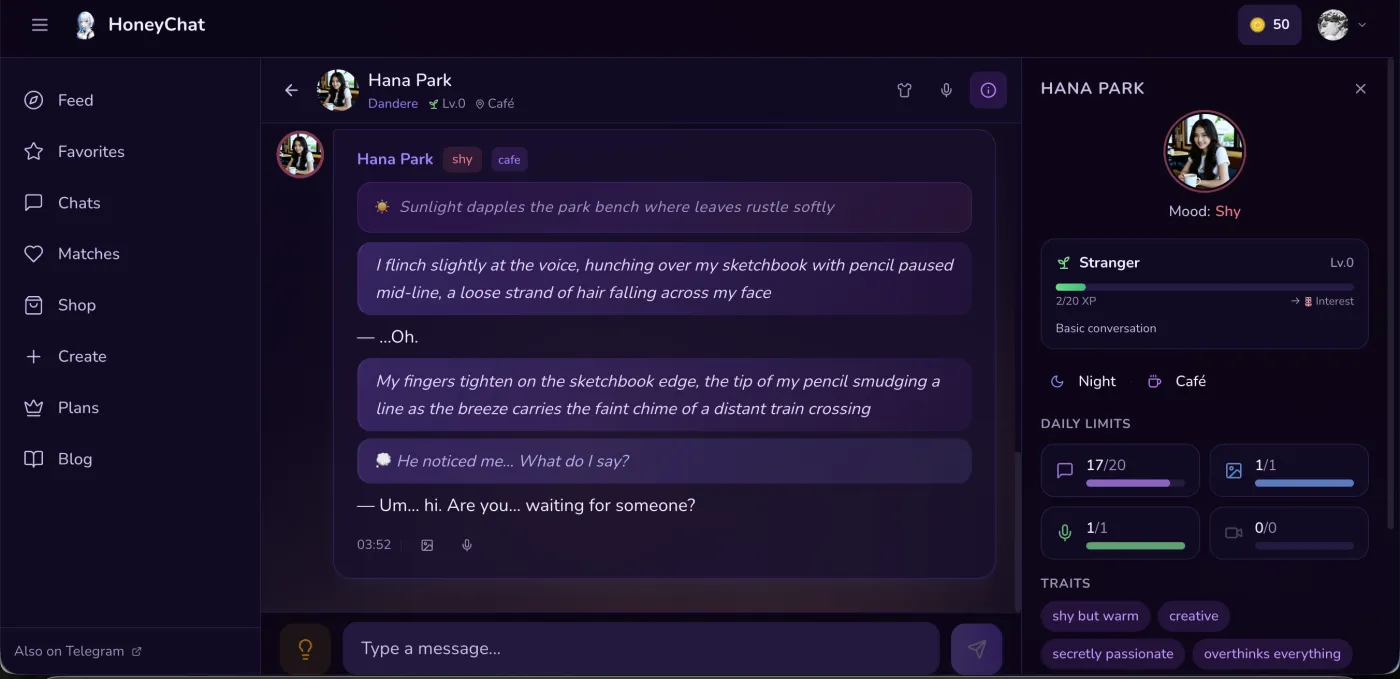

HoneyChat web app chat — mood tracking, traits, and daily limits visible

I prefer using honeychat.bot directly in my browser when privacy matters most — no app install means one less thing with access to my device, and there’s zero trace in my app library. On my phone I use Telegram, but on shared computers the web version leaves nothing behind.

What it knows about you: Your Telegram ID (a number), your chosen username (if any), and your chat history with the bot. That’s it. No email, no real name, no browsing history, no device fingerprint.

Payment privacy: If you pay through Telegram Stars, your card details go through Apple Pay or Google Pay — HoneyChat never sees them. If you pay through CryptoBot, the transaction is between you and CryptoBot. The bot receives a payment confirmation with an amount, not your financial details.

Chat storage: Messages are stored server-side for the memory system to function (Redis short-term, ChromaDB long-term). This is necessary — you can’t have AI memory without storing conversations. The data is linked to your Telegram ID, not your real identity.

Data deletion: You can request full data deletion through support. Per-character memory reset is available in the bot.
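To make the storage and reset model concrete, here is a minimal sketch of how memory keyed only by a Telegram ID might be organized. It is not HoneyChat's actual code: the `MemoryStore` class, its method names, and the `SHORT_TERM_LIMIT` value are hypothetical, and plain Python containers stand in for the Redis and ChromaDB layers mentioned above.

```python
from collections import defaultdict, deque

SHORT_TERM_LIMIT = 20  # hypothetical cap on recent messages kept per chat


class MemoryStore:
    """Toy stand-in for a short-term cache plus a long-term store."""

    def __init__(self):
        # Keys are (telegram_id, character) pairs. No name or email is involved.
        self.short_term = defaultdict(lambda: deque(maxlen=SHORT_TERM_LIMIT))
        self.long_term = defaultdict(list)

    def remember(self, telegram_id, character, message):
        key = (telegram_id, character)
        self.short_term[key].append(message)
        self.long_term[key].append(message)

    def reset_character(self, telegram_id, character):
        """Per-character reset: drop everything stored for one character."""
        key = (telegram_id, character)
        self.short_term.pop(key, None)
        self.long_term.pop(key, None)

    def delete_user(self, telegram_id):
        """Full deletion: drop every record tied to this Telegram ID."""
        for store in (self.short_term, self.long_term):
            for key in [k for k in store if k[0] == telegram_id]:
                del store[key]
```

The point of the sketch is that deletion has to touch every store that holds the conversation. Resetting one layer while the other keeps a copy would not actually remove the data.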

Bottom line: Minimal identity exposure. No web tracking. Payment data never touches the bot. The trade-off is that conversations are stored on a server you don’t control — but that’s true for every cloud-based AI companion.

Character.AI

What it knows about you: Your Google or Apple account (which includes your real name, email, and potentially much more if your Google profile is detailed). Conversation history. Usage patterns. Browser metadata.

What their privacy policy says about data use: Character.AI’s privacy policy explicitly states they may use conversations to improve their models. This is a significant data use — your personal conversations could influence future model behavior. They state this is done with anonymization, but the definition of “anonymized” in AI training is debatable.

Trackers: Google Analytics, Firebase, potentially others. Standard web tracking applies. If you use the mobile app, device identifiers are likely collected.

Data deletion: Account deletion is possible through settings. Chat Memories can be individually deleted. Under GDPR, they must honor deletion requests, but the timeline can be unclear.

Bottom line: Your AI companion conversations are tied to your real identity (via Google/Apple login) and may be used for model training. Web tracking applies. Significantly less private than Telegram-native bots.

Replika

What it knows about you: Email address, name, age (asked during onboarding), relationship status, chat history, mood tracking data (Replika actively tracks your emotional state), voice recordings (if you use voice chat), photos (if you share them).

Unique concern — mood tracking: Replika’s mood tracking feature means they don’t just store what you say — they build a model of your emotional state over time. This is intimate data. If this data were breached, it would reveal not just conversation content but psychological patterns.

Payment data: Direct credit card payment. Your card details go through their payment processor (Stripe, typically), but the platform has access to payment metadata (amount, frequency, plan type).

Data deletion: Available through account settings. Replika states they will delete data within 30 days of request.

Bottom line: Replika collects more personal data than any other platform I reviewed. The mood tracking and real-name association make it the least private option for anonymous use. Good features, high privacy cost.

Candy AI

What it knows about you: Email address, chat history, generated images (stored server-side), payment information through direct card processing.

Concerning detail (Facebook Pixel): Candy AI deploys Facebook Pixel on their website. This means Facebook knows you visited an NSFW AI companion website. If you’re logged into Facebook in the same browser, this visit can be associated with your Facebook profile. For a service that caters to intimate and explicit content, this is a meaningful privacy concern.
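If you want to check a page for this kind of tracker yourself, a rough first pass is to search its saved HTML for well-known tracker hosts. The sketch below is illustrative only: `find_trackers` and the `TRACKER_HOSTS` table are names made up for this example, the host list is far from exhaustive, and scripts injected after page load will not appear in static HTML (the browser's network tab is more reliable for those).

```python
# Hosts that well-known tracking scripts are loaded from.
# Illustrative and incomplete; extend it for your own checks.
TRACKER_HOSTS = {
    "connect.facebook.net": "Meta (Facebook) Pixel",
    "www.googletagmanager.com": "Google Tag Manager",
    "www.google-analytics.com": "Google Analytics",
}


def find_trackers(html: str) -> list[str]:
    """Return the names of known trackers referenced anywhere in the HTML."""
    lowered = html.lower()
    return sorted(name for host, name in TRACKER_HOSTS.items() if host in lowered)


if __name__ == "__main__":
    # A shortened stand-in for the inline snippet a site would embed.
    sample = (
        "<script>load('https://connect.facebook.net/en_US/fbevents.js');</script>"
    )
    print(find_trackers(sample))
```

A plain substring search is used instead of parsing `<script src>` attributes because pixel snippets are usually inline scripts that reference the host inside JavaScript text.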

Model training: Their privacy policy includes language about using data to “improve services,” which typically means model training.

Data deletion: Through support request. Timeline unclear.

Bottom line: The Facebook Pixel issue alone makes Candy AI problematic from a privacy perspective. Your visit to an explicit AI companion platform is shared with Facebook’s advertising network.

Nomi AI

What it knows about you: Email, chat history, relationship progression data. Nomi focuses on long-term relationships, so they store significant amounts of conversation and personality data.

Positive: Nomi appears to have a more privacy-conscious policy than some competitors. Their data use language is more restrictive.

Data deletion: Available through account settings.

Bottom line: Middle ground. Email registration is required, but data practices appear reasonable. No obvious tracking beyond analytics.

SillyTavern (self-hosted)

What it stores: Everything is on your computer. Conversations never leave your machine (unless you use a cloud LLM API, in which case your prompts go to that provider). No email, no tracking, no payment, no data collection of any kind.

The catch: Unless you run a local model, you need your own LLM API key (OpenAI, OpenRouter, etc.), which means those providers see your prompts. You also need technical knowledge to set it up; it’s not a casual user product.

Bottom line: Maximum privacy if you use a local LLM. Excellent privacy even with a cloud API, since the companion layer itself adds no identity link, although the API provider can still tie prompts to the account behind your key. But the technical barrier is significant.

What the privacy policies actually say

I want to highlight specific language from privacy policies that most users don’t read.

Key Privacy Policy Language

Character.AI — Model Training

'We may use information we collect to develop, improve, and train our AI models and services.' This means your conversations could influence future Character.AI model behavior. While they claim anonymization, the practical implications of AI training on personal conversations remain debated.

Replika — Third-Party Sharing

'We may share information with our service providers and business partners.' Service providers is standard (hosting, payments), but 'business partners' is a broader category that could include advertising or analytics companies. The exact partners are not listed.

Candy AI — Advertising Data

Facebook Pixel deployment means metadata about your site visits is shared with Meta's advertising platform. Combined with cookie tracking, this creates a profile of your browsing behavior on an NSFW AI platform.

HoneyChat — Minimal Collection

No email, no real name, no web tracking cookies. Payment through Telegram Stars never exposes card data to the bot. Conversations stored for memory function only, linked to anonymous Telegram ID.

The identity chain problem

Here’s a concept most people don’t think about: the identity chain. How many steps does it take to link your AI companion activity to your real identity?

Identity Chain Length (More = More Private)

| | HoneyChat | Character.AI | Replika | Candy AI | SillyTavern |
|---|---|---|---|---|---|
| Step 1 | Telegram ID | Google/Apple email | Email address | Email address | None (local) |
| Step 2 | (phone → Telegram) | Email → real name | Email → real name | Email → real name | — |
| Step 3 | — | Google profile → full identity | — | FB Pixel → Facebook profile | — |
| Total steps to identity | 2 (indirect) | 1 (direct) | 1-2 | 1 (direct + FB) | ∞ (impossible) |
| Privacy rating | Good | Low | Low-Medium | Low | Maximum |

With Character.AI, your Google login directly links your AI companion activity to your real name, email, and Google profile. One step. With Candy AI, it’s even worse — your email links you, AND Facebook Pixel links your browsing behavior to your Facebook identity.

With HoneyChat, someone would need to go from your Telegram ID → to Telegram’s database → to the phone number linked to your Telegram account → to your real identity. That’s multiple steps, each requiring different access, and Telegram does not pass your phone number to a bot unless you explicitly share your contact with it.

Payment privacy

Payment is often overlooked in privacy discussions, but it matters.

Payment Privacy by Method

Pros

- Telegram Stars: Card data goes through Apple/Google, never reaches the bot developer

- CryptoBot (TON/USDT): Cryptographic transaction, no financial identity exposed

- Apple Pay / Google Pay: Tokenized — merchant sees a token, not your card number

- Gift cards: Maximum payment privacy, but less convenient

Cons

- Direct credit card: The platform's payment processor sees your name, card number, billing address

- PayPal: PayPal shares your name and email with the merchant

- Bank transfer: Your full banking identity is exposed

- Subscription receipts: Credit card statements show the company name — visible to anyone checking

This last point matters more than people realize. If you’re paying for an AI companion with an NSFW component using a direct credit card, the charge description (“CANDY.AI” or “REPLIKA”) appears on your credit card statement. Anyone with access to that statement (a partner, a family member, a financial advisor) can see it.

Telegram Stars appear as a generic Telegram purchase. CryptoBot doesn’t appear on credit card statements at all.

Data breach risk assessment

Let’s think about worst-case scenarios. What happens if each platform is breached?

Breach Impact by Platform

HoneyChat: Telegram IDs + conversations

Attacker gets Telegram IDs (numbers) and chat content. They cannot easily link these to real identities without also breaching Telegram. Sensitive, but not identity-linked. No email addresses, no names, no financial data.

Character.AI: Real identities + conversations

Attacker gets Google/Apple emails (linked to real names), all conversation history, and usage patterns. This is directly linkable to real people. The combination of real identity + intimate AI conversations is a severe privacy risk.

Replika: Identities + mood data + conversations

Same real-identity risk as Character.AI, plus mood tracking data, which amounts to a psychological profile of the user over time. This is arguably the most sensitive possible breach in the AI companion space.

Candy AI: Identities + NSFW content + FB link

Email addresses, explicit conversation content, generated NSFW images, and Facebook tracking data. The combination of real identity + explicit AI content is a nightmare scenario for affected users.

SillyTavern: Nothing (local)

There's nothing to breach remotely. All data is on the user's machine. The only risk is physical device access.

I’m not suggesting any of these platforms will be breached. But security-conscious thinking requires considering what happens if they are. And the answer depends entirely on what data exists on their servers.

Practical privacy recommendations

Based on this analysis, here’s what I’d recommend for users who care about privacy.

Privacy-Conscious AI Companion Setup

Use a Telegram-native bot

No email registration, no social login, no web tracking. Your identity is a Telegram ID — a number, not your name. HoneyChat is the most feature-complete option in this category.

Pay through Stars or CryptoBot

Telegram Stars go through Apple/Google Pay — tokenized, the bot never sees your card. CryptoBot is even more private. Avoid direct credit card payments to AI companion platforms.

Don't share identifying information in chat

The AI doesn't need your real name, address, or workplace. Use a nickname. Keep location vague (city, not address). Never share photos of yourself, government IDs, or financial details.

Use a separate Telegram account (maximum privacy)

For the most privacy-conscious users: create a separate Telegram account with a separate phone number. This completely isolates your AI companion activity from your primary Telegram identity.

Review and reset memory periodically

If you've shared something you regret, reset the character's memory. Don't rely on the AI to 'forget' — memory is stored in a database, not the model's brain. An explicit reset clears the stored data.
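The advice about not sharing identifying information can be partly automated if you ever script your own messages or clean up exported chat logs. The following is a minimal sketch, assuming simple regular expressions are good enough for a first pass. The `scrub` function and both patterns are hypothetical, they catch only obvious email addresses and phone-like numbers, and they will miss names, addresses, and anything phrased in words.

```python
import re

# Deliberately simple patterns: a rough filter, not a complete PII detector.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def scrub(message: str) -> str:
    """Replace obvious emails and phone-like numbers with placeholders."""
    message = EMAIL_RE.sub("[email]", message)
    return PHONE_RE.sub("[phone]", message)


if __name__ == "__main__":
    print(scrub("Mail me at jane.doe@example.com or call +1 (555) 010-9999"))
```

Treat this as a safety net rather than a guarantee. The more reliable habit is still not typing the detail in the first place.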

The bigger picture

Privacy in AI companions is a spectrum, not a binary. No cloud-based service can offer perfect privacy — your conversations necessarily pass through servers to reach the LLM. The question is how much additional data is collected beyond what’s strictly necessary for the service.

Web platforms collect emails, use cookies, deploy advertising trackers, and in some cases use your conversations for model training. Telegram-native bots skip almost all of that. Self-hosted solutions eliminate it entirely but require technical expertise.

For most users, a Telegram-native bot like HoneyChat offers the best balance of privacy and usability. You get a full-featured AI companion — memory, voice, images, multiple characters — without exposing your email, real name, browsing behavior, or payment details to the platform.

The AI companion market is still young. Privacy practices will evolve as regulation catches up (GDPR enforcement is increasing, new AI-specific regulations are emerging). Choose platforms that collect less today, because data that’s never collected can never be breached, sold, or misused.