Last month I did something I’d never done before. I actually read a privacy policy. Not skimmed — read. Word by word.

It was for one of those popular AI companion apps, the kind with millions of downloads and cutesy anime avatars. I’d been using it casually for a few weeks. Figured it was harmless. Then a friend sent me a news article about AI chatbot data leaks, and I thought, “Eh, let me just check what they’re actually collecting.”

Twenty minutes later I was genuinely horrified.

They stored every message. Every single one. They logged my device ID, my IP address, my approximate location. They reserved the right to share “anonymized” conversation data with third-party partners for “research and improvement purposes.” And the best part? Buried on page six, a clause about using conversation content to train future models. My private, personal, sometimes embarrassingly honest conversations — feeding a dataset somewhere.

I deleted the app that night.

The Privacy Problem Nobody Talks About

Here’s the thing about AI companion apps: people use them differently than they use ChatGPT or Google. You’re not asking for recipe ideas. You’re venting about your day. Talking about loneliness. Maybe exploring parts of your personality you don’t show anyone else.

That’s the whole point. These apps market themselves as a safe space.

But most of them are the opposite of safe. They just feel safe because the interface is cute and the AI says nice things. Meanwhile, behind the curtain, they’re hoarding data like it’s Bitcoin in 2021.

I spent three weeks researching the privacy practices of every major AI companion platform I could find. What I discovered was… not great.

Replika requires email signup and stores conversation data on their servers. They went through a whole controversy in 2023 when they suddenly removed romantic features, and users realized they had zero control over their own data or the product direction.

Character.AI requires Google sign-in. Your conversations pass through their servers. They’ve been training models on user conversations. For a platform used heavily by teenagers, the data practices are questionable at best.

Candy AI and Crushon.AI — both require email, both store conversations server-side, both have vague privacy policies about data sharing.

The pattern is the same everywhere: sign up with your real identity, hand over your most personal thoughts, hope they don’t get hacked or acquired or just decide to change their terms of service one Tuesday afternoon.

My Second Privacy Wake-Up Call

About a year ago, a coworker — let’s call him Dave — got a targeted ad on Instagram for couples therapy. Weird, because he’d never searched for anything related to relationships on any social platform. But he had been talking to an AI companion app about problems with his girlfriend.

Coincidence? Maybe. But “maybe” is doing a lot of heavy lifting in that sentence.

Dave couldn’t prove the app sold his data. He couldn’t prove it didn’t, either. That’s the problem with these platforms. The data flows are so opaque that you’re basically trusting a startup’s pinky promise that your intimate conversations aren’t being monetized.

When I brought this up with Dave, he just shrugged. “What am I gonna do? They all do this.”

Except they don’t. Not all of them.

What Actually Makes an AI Companion Private

Before I get into specific solutions, let’s establish what “private” should actually mean for an AI companion. Because a lot of apps slap a lock icon on their landing page and call it a day.

Real privacy for AI chat means:

No Sign-Up Required

No email, no phone number, no personal info. You shouldn't need to hand over your identity just to have a conversation.

Encrypted Infrastructure

Conversations should be encrypted in transit and protected by the platform's own security layer, not left to HTTPS alone.

No Data Collection or Sale

Your conversations shouldn't be logged, analyzed, sold, or used to train models without explicit consent.

Conversations Stay Local

Chat history should live in your messaging app — not on some startup's AWS instance.

Anonymous Payment Options

Pay with crypto or platform tokens instead of handing over credit card details.

Real Data Deletion

One command to wipe everything. Not a 30-day “we’ll get around to it” process.

That’s six requirements. Most AI companion apps meet zero of them. Some meet one or two. I only found one that meets all six.

How HoneyChat Does Privacy Differently

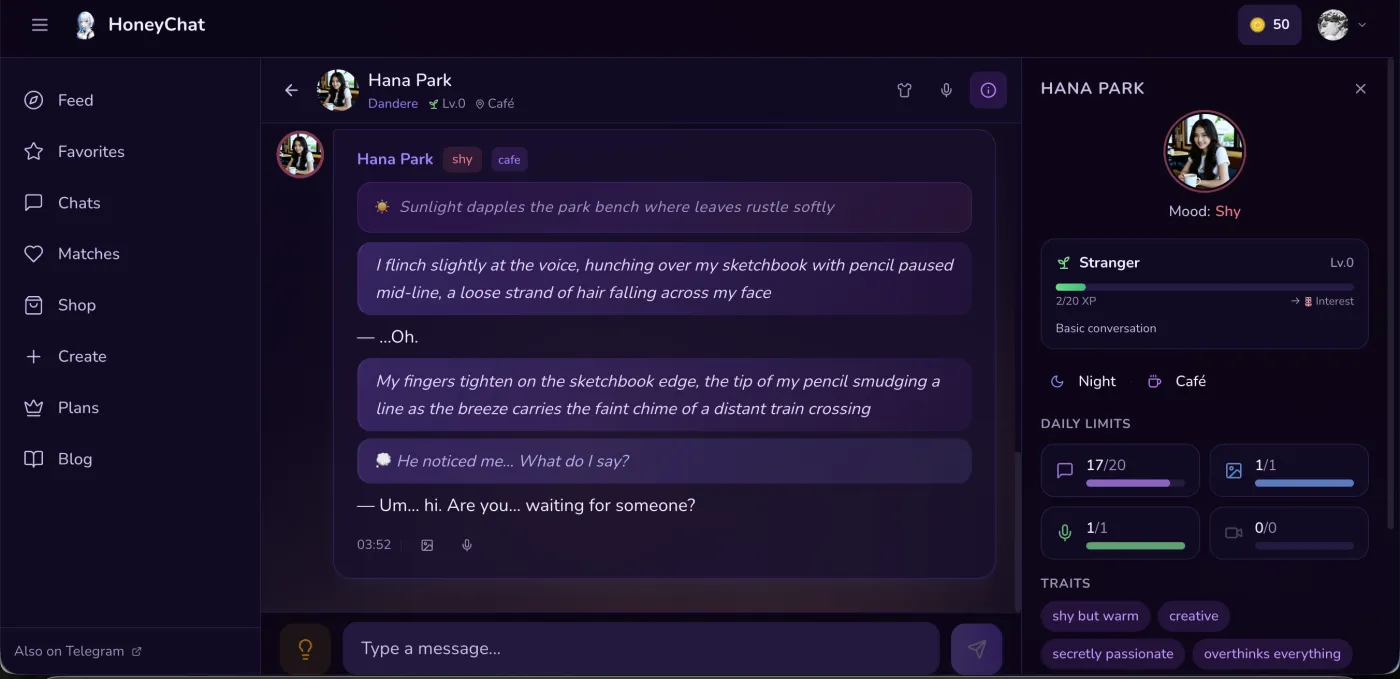

HoneyChat web app chat — mood tracking, traits, and daily limits visible

For maximum privacy, I open honeychat.bot in a private browser window — no app install, no email, no account, nothing saved on my device when I close the tab. On my phone I use Telegram, which at least keeps things out of the app store entirely.

HoneyChat is a Telegram-based AI companion bot. I stumbled on it while doom-scrolling through AI bot directories after the whole privacy policy incident. At first I was skeptical — I’d been burned before. But the more I looked into it, the more the architecture made sense.

No sign-up. You open Telegram, search for the bot, and start chatting. That’s it. No email. No phone verification (beyond what Telegram itself requires). No profile creation. No “please agree to share your data with our 47 advertising partners.”

Telegram’s encryption. Instead of building their own chat infrastructure (and their own security vulnerabilities), HoneyChat runs inside Telegram. Your messages travel through Telegram’s encrypted pipeline. It’s not a perfect system (bot chats use Telegram’s client-server encryption rather than end-to-end, and Telegram’s encryption has its critics), but it’s a hell of a lot better than most AI companion apps, which keep your messages readable on their own servers with nothing but TLS in front of them.

No data sold. This is the big one. HoneyChat doesn’t collect personal data. They don’t sell conversation logs. They don’t use your chats to train models. The business model is subscriptions, not data harvesting.

Conversations stay in Telegram. Your chat history lives in your Telegram app, not on a separate server. This matters more than people realize. If HoneyChat disappeared tomorrow, your conversations wouldn’t be sitting in some orphaned database waiting to get breached.

Payment privacy. You can pay with Telegram Stars (which process through Apple Pay or Google Pay — no credit card goes to HoneyChat) or cryptocurrency. Neither method exposes your financial details to the bot developer.

The /delete command. Send /delete to the bot and your data is gone. Character preferences, conversation memory, the whole thing. No email to support, no 14-business-day waiting period, no “we’ll retain anonymized data for quality purposes.”
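To make “one command wipes everything” concrete, here’s a minimal sketch of what a /delete handler could look like. This is not HoneyChat’s code. `CompanionStore`, `UserRecord`, and `handle_command` are names I made up for illustration, and the storage is a plain in-memory dictionary standing in for whatever a real bot would use.

```python
from dataclasses import dataclass, field


@dataclass
class UserRecord:
    """Everything a hypothetical bot keeps about one user."""
    character_prefs: dict = field(default_factory=dict)
    memory: list = field(default_factory=list)


class CompanionStore:
    """In-memory stand-in for a real bot's storage (an assumption)."""

    def __init__(self) -> None:
        self._records: dict[int, UserRecord] = {}

    def remember(self, user_id: int, note: str) -> None:
        # Create the record on first use, then append to its memory.
        self._records.setdefault(user_id, UserRecord()).memory.append(note)

    def has_data(self, user_id: int) -> bool:
        return user_id in self._records

    def delete_user(self, user_id: int) -> bool:
        """Drop the whole record; return True if something was removed."""
        return self._records.pop(user_id, None) is not None


def handle_command(store: CompanionStore, user_id: int, text: str) -> str:
    """Route one incoming message; only /delete is handled in this sketch."""
    if text.strip() == "/delete":
        if store.delete_user(user_id):
            return "All your data has been deleted."
        return "Nothing stored for you."
    return "(normal chat handling would go here)"
```

The point of the design is that preferences and memory hang off a single per-user record, so deleting that record removes everything at once instead of leaving fragments in separate tables.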

Privacy Comparison: HoneyChat vs Everyone Else

I put together a comparison of how major AI companion platforms handle privacy. The results speak for themselves.

AI Companion Privacy Comparison

| | HoneyChat | Replika | Character.AI | Candy AI | Crushon.AI |
|---|---|---|---|---|---|
| No Email/Phone Required | | | | | |
| Encrypted Chat | Telegram | TLS only | TLS only | TLS only | TLS only |
| No Data Sold to Third Parties | | | | | |
| Conversations Stay in Messaging App | | | | | |
| Anonymous Payment (Card/Stars/Crypto) | | | | | |
| One-Command Data Deletion | | | | | |
| No Account = No Data Breach Risk | | | | | |
| Open About Data Practices | Partial | Partial |

Yeah. It’s that lopsided.

The Third Story: My Friend Who Got Doxxed

I have one more anecdote, and this one’s darker.

A friend of mine — early 20s, lives with conservative parents — was using one of these AI companion apps to explore her identity. Stuff she wasn’t comfortable discussing with people in her life yet. Normal, healthy behavior for someone figuring things out.

The app got breached. Not a massive headline-news breach, just a small one that affected a few thousand users. Her email was in the dump. Some creep cross-referenced the email with her social media, figured out who she was, and sent screenshots of her conversations to her family’s church group.

She’s fine now. But it took months.

That’s the real cost of bad AI chat privacy. It’s not abstract. It’s not “oh no, advertisers know I like anime.” It’s real people having real parts of their lives exposed because some startup couldn’t be bothered to implement proper security — or worse, because they designed their system to collect as much data as possible in the first place.

An AI companion that doesn’t know your email can’t leak your email. An AI companion that doesn’t store your conversations on a separate server can’t have that server breached. This isn’t rocket science. It’s just that most companies have no financial incentive to build things this way.

“But What About the Downsides?”

Look, I’m not going to pretend HoneyChat’s approach is perfect. There are real tradeoffs.

You’re still trusting Telegram. HoneyChat inherits Telegram’s security, which means you’re also inheriting Telegram’s weaknesses. Telegram’s standard chats use server-client encryption, not end-to-end. Telegram has faced questions about its ties to various governments. If you’re a journalist or activist in a hostile country, Telegram-based anything might not be sufficient. For most people chatting with an AI companion? It’s way better than the alternatives. But it’s not Signal-level privacy.

The /delete command is trust-based. When you hit /delete, you’re trusting that HoneyChat actually deletes everything. There’s no independent audit confirming this. It’s the same kind of trust issue every cloud service has, just on a smaller scale.

Free tier has limits. 20 messages per day on the free plan. If you want unlimited messaging and the full feature set (voice, photos, video), you’ll need a subscription. Privacy shouldn’t be a luxury, and the core privacy features apply to all tiers — but the full experience isn’t free.

It’s still AI. Your messages are processed by language models. Those models run on servers. There’s a processing pipeline involved. “Conversations stay in Telegram” is somewhat simplified — the AI has to read your messages to respond. The key difference is that HoneyChat doesn’t store or sell that data afterward.
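The difference between “processes your message” and “keeps your message” is easier to see in code. Below is a rough sketch, assuming a hypothetical `ChatPipeline` class and a toy `echo_model` in place of a real language model. None of this comes from HoneyChat. It only shows that retention is a separate, optional step layered on top of generating a reply.

```python
from typing import Callable


def echo_model(prompt: str) -> str:
    """Toy stand-in for a language model, for demonstration only."""
    return f"You said: {prompt}"


class ChatPipeline:
    """Hypothetical pipeline: the model must read the message, storing it is optional."""

    def __init__(self, model: Callable[[str], str], retain: bool = False) -> None:
        self.model = model
        self.retain = retain
        self.transcript: list[tuple[str, str]] = []

    def handle(self, message: str) -> str:
        # The model has to see the text to answer it.
        reply = self.model(message)
        # Retention happens only if the operator switched it on.
        if self.retain:
            self.transcript.append((message, reply))
        return reply


private = ChatPipeline(echo_model)               # process and discard
logging = ChatPipeline(echo_model, retain=True)  # process and keep
private.handle("rough day")
logging.handle("rough day")
```

After those two calls, `private.transcript` is still empty and `logging.transcript` holds one entry. From the outside, both bots look identical, which is exactly why the no-storage promise comes down to trust.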

I think being upfront about these things is more trustworthy than pretending everything is perfect. Every system has tradeoffs. The question is whether the tradeoffs are reasonable.

On a related note, Secret Desires AI is another platform worth knowing about. It uses a Hearts currency system with opaque pricing, which is a transparency problem more than a privacy one. The feature set is interesting, but the lack of transparency is its own red flag.

Pricing (All Tiers Keep Core Privacy Features)

One thing I appreciate: the privacy features aren’t locked behind a paywall. Free users get the same no-signup, no-data-collection, anonymous experience. Paid tiers add more messages, photos, voice, and video — not more privacy.

Free

- 20 msg/day

- 1 image/day

- 1 voice/day

- 0 videos/mo

- 1 character

Basic

- 60 msg/day

- 10 images/day

- 10 voice/day

- 3 videos/mo

- 2 characters

Premium

- Unlimited messages

- 30 images/day

- 20 voice/day

- 8 videos/mo

- 3 characters

VIP

- Unlimited messages

- 80 images/day

- 50 voice/day

- 15 videos/mo

- 5 characters

Elite

- Unlimited messages

- 150 images/day

- 100 voice/day

- 25 videos/mo

- Unlimited characters

You can pay by card, with Telegram Stars, or with cryptocurrency. A credit card is optional at every tier, never required.
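If you think in tables rather than bullet lists, the tiers above can be written down as data. This is just my own sketch of the published limits. The `Tier` class, the `TIERS` mapping, and `can_send_message` are illustrative names, not anything the bot exposes, and `None` is my shorthand for “unlimited.”

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    messages_per_day: Optional[int]  # None means unlimited
    images_per_day: int
    voice_per_day: int
    videos_per_month: int
    characters: Optional[int]        # None means unlimited


# Limits copied from the tier lists above.
TIERS = {
    "Free": Tier(20, 1, 1, 0, 1),
    "Basic": Tier(60, 10, 10, 3, 2),
    "Premium": Tier(None, 30, 20, 8, 3),
    "VIP": Tier(None, 80, 50, 15, 5),
    "Elite": Tier(None, 150, 100, 25, None),
}


def can_send_message(tier_name: str, sent_today: int) -> bool:
    """Return True if one more message fits under the tier's daily cap."""
    limit = TIERS[tier_name].messages_per_day
    return limit is None or sent_today < limit
```

On the free plan, `can_send_message("Free", 19)` is true and `can_send_message("Free", 20)` is false, while any tier with unlimited messages always returns true.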

How to Set Up a Private AI Companion (2 Minutes)

This is embarrassingly simple, which is kind of the point.

- Open Telegram. If you don’t have it, download it. Available on iOS, Android, desktop, web.

- Search for HoneyChat or tap the link below.

- Press Start. That’s it. You’re chatting. No form to fill out, no verification email to find in your spam folder, no “please upload a profile photo.”

- Pick a character. Browse the collection or describe what you want.

- Chat. Your conversation lives in Telegram. If you ever want out, send /delete.

The whole process takes less time than reading a privacy policy. Which, let’s be honest, is how most people end up in trouble — they don’t read the privacy policy, and the AI app banks on that.

Why This Matters More in 2026

We’re in an era where AI companions are going mainstream. Downloads are up 340% year-over-year. Major tech companies are building their own versions. The market is projected to hit $5 billion by 2028.

And most of these products are built on the same model: collect as much data as possible, monetize it later, figure out the privacy implications when (not if) something goes wrong.

The EU AI Act is starting to catch up. California’s new AI transparency law kicks in later this year. But regulation moves slowly, and enforcement moves even slower. Right now, the best protection is choosing tools that are built for privacy from the ground up, not tools that retroactively patch privacy onto a data-hungry architecture.

HoneyChat isn’t the only privacy-focused AI tool out there. But in the AI companion space specifically, I haven’t found another option that checks this many boxes. No signup, encrypted infrastructure, anonymous payments, instant deletion. It’s what this category should look like.

Final Thoughts

I started this article with a story about reading a privacy policy. Most people never do that. Most people trust the cute interface and the friendly onboarding and the “we take your privacy seriously” banner that every company slaps on their website.

But AI companions are different from other apps. You’re sharing real thoughts, real emotions, sometimes real vulnerabilities. The privacy stakes are higher here than almost anywhere else in tech.

If you’re going to talk to an AI, at least make sure the AI doesn’t know who you are.