A context window is the fixed amount of text an AI model can process at once — typically 8,192 to 128,000 tokens. Vector memory is a separate system that stores conversations as mathematical embeddings in a database like ChromaDB, enabling semantic search across unlimited history. HoneyChat combines both: a 20-message Redis cache for immediate context and ChromaDB for permanent semantic memory.

Here’s a question that seems simple but breaks most AI chatbots: “Do you remember what I told you about my job last week?”

If the AI has a context window of 8K tokens and your conversation exceeded that window three days ago — the answer is no. The AI doesn’t remember. It can’t remember. The information doesn’t exist in the model’s universe anymore. It’s not “forgotten” the way humans forget. It’s gone — as if the conversation never happened.

I’ve spent the last year testing AI companion platforms, and this single issue — memory — is what separates the ones that feel like talking to a person from the ones that feel like talking to a very eloquent amnesiac. The technology that solves it is vector memory, and surprisingly few platforms actually implement it properly.

This is the technical explanation of why your AI forgets you, and how the fix works.

Part 1: The context window problem

Every large language model — GPT, Llama, Mistral, Claude — has a context window. Think of it as the model’s working memory: the total amount of text it can “see” at any given moment.

Here’s what needs to fit inside that window for a single AI companion response:

What Competes for Context Space

System prompt

The character's personality, background, speech style, behavioral rules. This alone can consume 500-1,500 tokens. It's included in every single request — it never goes away.

Retrieved memories

If the bot has a memory system, relevant past conversations are injected here. Typically 300-800 tokens. Without a memory system, this is zero — and everything depends on what's in the conversation history below.

Conversation history

The recent messages between you and the AI. This is the most variable part — it grows with every exchange. At some point, the oldest messages have to be cut to make room.

Your new message

What you just typed. Usually 50-300 tokens.

Space for the response

The model needs room to generate its answer. This is budgeted per tier — 300 tokens for free users, up to 2,000 for premium.

Do the math. With an 8K context window, the system prompt taking 1,000 tokens, and the response budget at 500 tokens, you have roughly 6,700 tokens for conversation history and memories. That’s about 30 messages of average length.

Message 31? The oldest message is quietly dropped. Message 50? The first 20 messages are gone. Your AI doesn’t “remember” forgetting them — they simply never existed from the model’s perspective.
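The arithmetic above, and the way old messages fall off the front, can be sketched as a token-budgeted sliding window. This is a minimal sketch under stated assumptions: a flat 220 tokens per message (roughly 6,700 divided by 30) stands in for a real tokenizer, retrieved memories are left out, and `history_budget` and `fit_history` are hypothetical names.

```python
CONTEXT_WINDOW = 8192
SYSTEM_PROMPT_TOKENS = 1000
RESPONSE_BUDGET = 500
TOKENS_PER_MESSAGE = 220  # assumption: average message size instead of a real tokenizer


def history_budget(window: int, system_prompt: int, response: int) -> int:
    """Tokens left over for conversation history (and memories, if any)."""
    return window - system_prompt - response


def fit_history(messages: list[str], budget: int, tokens_per_message: int) -> list[str]:
    """Keep the newest messages that fit in the budget; older ones are silently dropped."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        if used + tokens_per_message > budget:
            break
        kept.append(message)
        used += tokens_per_message
    kept.reverse()  # restore chronological order
    return kept


budget = history_budget(CONTEXT_WINDOW, SYSTEM_PROMPT_TOKENS, RESPONSE_BUDGET)
conversation = [f"message {i}" for i in range(1, 51)]
window = fit_history(conversation, budget, TOKENS_PER_MESSAGE)
print(budget, len(window), window[0])  # 6692 30 message 21
```

With 50 messages, only messages 21 through 50 reach the model; the first 20 never appear in its input.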

Why bigger context windows don’t solve this

“But wait,” you might say. “Models with 128K context windows exist. Just use those.”

Three problems:

Cost scales linearly with context. Filling a 128K window costs roughly 16 times more per request than an 8K window. For a bot serving thousands of daily users, that’s the difference between sustainable and bankrupt.

“Lost in the middle” is real. Research from multiple labs has confirmed that LLMs struggle to retrieve information from the middle of long contexts. They attend well to the beginning and end, but information buried in the middle — like a conversation from three days ago — gets effectively ignored.

It’s still finite. Even 128K tokens only holds a few hundred messages. For a user who chats daily over months, that’s still not enough. The window always runs out eventually.

Vector memory doesn’t have these problems.

Part 2: How vector memory works

Vector memory is a fundamentally different approach. Instead of cramming everything into the context window, it stores conversations in an external database and retrieves only what’s relevant.

Vector Memory Pipeline

Conversations become numbers

An embedding model converts conversation segments (3-5 messages) into vectors: arrays of several hundred to a few thousand floating-point numbers (nomic-embed-text produces 768, OpenAI's text-embedding-3-large produces 3,072 by default). 'I'm worried about my presentation tomorrow' and 'nervous about work deadline' produce similar vectors despite sharing almost no words.

Vectors go into the database

The vectors are stored in a vector database (ChromaDB, Pinecone, Weaviate) along with metadata: timestamp, topic, emotional tone. The database builds an index for fast similarity search — typically using HNSW (Hierarchical Navigable Small World) algorithms.

New message triggers search

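When you send a new message, it's also converted into a vector. The database then looks for the stored vectors with the highest cosine similarity to it. Because HNSW is an approximate nearest-neighbor index, the search does not have to compare the query against every stored vector, so it typically takes 10-50ms and slows down only gradually as more conversations are stored.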

Top-K results become memories

The most similar past conversations (Top-K, typically 3-5) are returned. These are formatted as 'memories' and injected into the context window alongside the system prompt and recent history.

AI responds with context

The LLM processes the system prompt, memories, recent history, and your message as one continuous input. From its perspective, these memories are just part of the conversation — it naturally incorporates them into its response.
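The five steps can be sketched end to end with an in-memory stand-in for the vector database. This is a hedged illustration, not ChromaDB's API: `MemoryStore`, `build_prompt`, the prompt layout, and the hand-written 3-dimensional vectors are all hypothetical (a real embedding model returns hundreds or thousands of dimensions), and the search is a brute-force scan rather than an HNSW index.

```python
import math
from dataclasses import dataclass, field


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


@dataclass
class MemoryStore:
    """Toy stand-in for a vector DB: a list of (vector, text, metadata) entries."""
    entries: list[tuple[list[float], str, dict]] = field(default_factory=list)

    def add(self, vector: list[float], text: str, metadata: dict) -> None:
        # Step 2: store the vector together with its text and metadata.
        self.entries.append((vector, text, metadata))

    def search(self, query: list[float], k: int = 3,
               threshold: float = 0.7) -> list[tuple[float, str, dict]]:
        # Step 3: score every entry (brute force; a real DB would use an HNSW index).
        scored = [(cosine(query, vec), text, meta) for vec, text, meta in self.entries]
        # Step 4: keep hits above the threshold, best first, at most k of them.
        hits = [hit for hit in scored if hit[0] >= threshold]
        hits.sort(key=lambda hit: hit[0], reverse=True)
        return hits[:k]


def build_prompt(system_prompt: str, memories: list[tuple[float, str, dict]],
                 recent: list[str], new_message: str) -> str:
    # Step 5: system prompt, memories, recent history, and new message become one input.
    memory_lines = [f"- ({meta['date']}) {text}" for _, text, meta in memories]
    parts = [system_prompt, "Relevant memories:", *memory_lines,
             "Recent conversation:", *recent, f"User: {new_message}"]
    return "\n".join(parts)


# Usage with made-up vectors standing in for real embeddings.
store = MemoryStore()
store.add([0.9, 0.1, 0.0], "User is nervous about a work deadline.", {"date": "day 1"})
store.add([0.0, 0.2, 0.9], "User's cat is called Pixel.", {"date": "day 2"})
query = [0.8, 0.2, 0.1]  # pretend embedding of "Worried about my presentation tomorrow"
hits = store.search(query)
print(build_prompt("You are a warm, supportive companion.", hits,
                   ["User: hey", "AI: hi! how was your day?"],
                   "Worried about my presentation tomorrow"))
```

Only the deadline memory clears the 0.7 threshold here, so the cat fact stays out of the prompt. In ChromaDB itself, the corresponding calls are `collection.add(...)` and `collection.query(query_texts=[...], n_results=3)`; note that `query` reports distances rather than similarities, so in a cosine-space collection a 0.7 similarity cutoff corresponds to a distance of 0.3.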

The crucial insight: vector memory is selective. It doesn’t try to include everything — it includes what’s relevant. A six-month conversation history might contain 500 stored embeddings, but only 3-5 are retrieved for any given message. This keeps the context window manageable while providing the illusion of perfect memory.

Cosine similarity: the math of remembering

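Two vectors are compared using cosine similarity, a measure of the angle between them in high-dimensional space. The result ranges from -1 (vectors pointing in opposite directions) to 1 (pointing the same way, i.e. identical meaning). Note that -1 does not correspond to "opposite meaning": two sentences with opposite sentiment about the same topic usually still score well above zero.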

In practice (the exact cutoffs vary from one embedding model to another):

- 0.9+: Nearly identical meaning (paraphrase)

- 0.8-0.9: Strongly related (same topic and sentiment)

- 0.7-0.8: Related (same broad topic)

- Below 0.7: Probably not relevant enough to retrieve

Most systems set a minimum threshold around 0.7. Below that, the memory isn’t surfaced — which prevents the AI from making irrelevant or confusing connections.
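Cosine similarity is the dot product of two vectors divided by the product of their lengths: cos(θ) = (a · b) / (|a| |b|). A minimal sketch of that formula and of the bands listed above follows; the cutoffs are this article's rough values, not constants from any library, and `relevance_band` is a hypothetical helper.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """cos(theta) = (a . b) / (|a| * |b|), ranging from -1 to 1."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)


def relevance_band(score: float) -> str:
    # Rough cutoffs from the list above; real thresholds depend on the embedding model.
    if score >= 0.9:
        return "nearly identical"
    if score >= 0.8:
        return "strongly related"
    if score >= 0.7:
        return "related"
    return "not retrieved"  # below the ~0.7 minimum, the memory is not surfaced


print(round(cosine_similarity([1.0, 2.0, 3.0], [2.0, 4.0, 6.0]), 3))  # 1.0 (same direction)
print(round(cosine_similarity([1.0, 0.0], [-1.0, 0.0]), 3))           # -1.0 (opposite direction)
print(relevance_band(0.83))                                          # strongly related
```

Because only direction matters, a vector and a scaled copy of it score exactly 1.0, which is why embedding length (message length, for instance) does not by itself affect retrieval.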

Part 3: Vector memory vs context window — head to head

Context Window vs Vector Memory

| | Context Window Only | Vector Memory (+ Context) | Combined System |
|---|---|---|---|
| Capacity | 8K-128K tokens (finite) | Unlimited embeddings | Best of both |
| What it remembers | Last 10-30 messages | Any past conversation by topic | Recent + relevant past |
| Time limit | Session-based (resets) | Permanent | Permanent |
| Search method | None (sequential only) | Semantic similarity | Sequential + semantic |
| Cost per message | Fixed (context size) | Embedding cost + search | Slightly higher |
| Emotional context | Recent only | Encoded in embeddings | Full range |
| Scalability | Degrades with length | Constant performance | Constant |
| Implementation complexity | Simple | Moderate (needs vector DB) | Complex (multi-layer) |

The combined system — context window for immediate coherence, vector database for long-term recall — is what production-grade AI companions use. It’s more complex to build, but the user experience difference is enormous.

Part 4: The three-layer architecture

A proper memory system isn’t just “vector database.” It’s a three-layer architecture where each layer handles a different time scale.

Layer 1: Redis (seconds to days)

Redis is an in-memory data store. It holds the last 20 messages for each conversation, keyed by user and character. Access time: microseconds. TTL (time-to-live): 7 days.

This is what keeps the conversation coherent within a single session. Without Redis, the AI would need to query the vector database for every aspect of the current conversation — slower and less reliable for immediate context.
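The Layer 1 pattern (the last 20 messages per user and character, expiring after 7 days) is commonly built in Redis from three commands: `RPUSH` to append, `LTRIM key -20 -1` to keep only the newest 20 entries, and `EXPIRE` to reset the TTL. So that the sketch runs without a Redis server, it emulates those commands with a dictionary; the key format `chat:{user_id}:{character_id}` and the `RecentMessageCache` class are hypothetical, not HoneyChat's actual schema.

```python
import time
from collections import deque

MAX_MESSAGES = 20            # messages kept per conversation
TTL_SECONDS = 7 * 24 * 3600  # 7 days


class RecentMessageCache:
    """Emulates one Redis list per (user, character) with RPUSH + LTRIM + EXPIRE."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock  # injectable so expiry can be tested
        self._data = {}      # key -> (deque of messages, expiry time)

    @staticmethod
    def key(user_id: int, character_id: str) -> str:
        return f"chat:{user_id}:{character_id}"  # hypothetical key format

    def append(self, user_id: int, character_id: str, message: str) -> None:
        self.recent(user_id, character_id)  # purge the key first if its TTL has passed
        k = self.key(user_id, character_id)
        entry = self._data.get(k)
        buffer = entry[0] if entry else deque(maxlen=MAX_MESSAGES)
        buffer.append(message)  # RPUSH, with maxlen acting like LTRIM key -20 -1
        self._data[k] = (buffer, self._clock() + TTL_SECONDS)  # EXPIRE resets the TTL

    def recent(self, user_id: int, character_id: str) -> list[str]:
        k = self.key(user_id, character_id)
        entry = self._data.get(k)
        if entry is None:
            return []
        buffer, expires_at = entry
        if self._clock() >= expires_at:
            del self._data[k]  # like Redis evicting a key whose TTL ran out
            return []
        return list(buffer)  # oldest first, like LRANGE key 0 -1
```

Against a real server, the same three steps map to redis-py's `rpush`, `ltrim`, and `expire` calls, typically sent together in a pipeline. Resetting the TTL on every message means the 7-day clock counts from the last message, not the first.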

Layer 2: ChromaDB (days to forever)

ChromaDB stores vector embeddings of conversation segments. No time limit. Search by semantic similarity. This is what enables the AI to recall a conversation from three weeks ago when a relevant topic comes up.

The storage isn’t every single message — that would create too much noise. Instead, meaningful conversation segments (3-5 related messages) are bundled, embedded, and stored with metadata.
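Bundling messages into segments before embedding might look like the sketch below. It is an assumption-laden illustration: it groups consecutive messages into fixed windows of up to 4 (inside the 3-5 range above) instead of detecting topic boundaries, and the `Message`/`Segment` fields and metadata keys are hypothetical, not a documented schema.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str       # "user" or "assistant"
    text: str
    timestamp: int  # Unix seconds


@dataclass
class Segment:
    text: str       # what gets embedded and later shown to the model as a memory
    metadata: dict  # stored next to the vector for filtering and display


def bundle_segments(messages: list[Message], size: int = 4,
                    min_size: int = 3) -> list[Segment]:
    """Group consecutive messages into segments; a too-short remainder is held back."""
    segments = []
    for start in range(0, len(messages), size):
        chunk = messages[start:start + size]
        if len(chunk) < min_size:
            break  # wait for more messages rather than embedding a fragment
        text = "\n".join(f"{m.role}: {m.text}" for m in chunk)
        segments.append(Segment(text=text, metadata={
            "start_ts": chunk[0].timestamp,
            "end_ts": chunk[-1].timestamp,
            "n_messages": len(chunk),
        }))
    return segments
```

Holding back a short tail keeps single stray lines ("ok", "lol") from becoming their own noisy memories; they get embedded once enough follow-up messages arrive.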

Layer 3: Summarization (compression)

When conversation history exceeds the token budget, older exchanges are summarized by the LLM itself. A 20-message sequence becomes a 200-token paragraph. This summary serves double duty: it becomes the compressed start of the conversation history in Redis AND gets stored as a searchable embedding in ChromaDB.
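The compression step can be sketched as a loop: while the history is over budget, fold the oldest block of messages into one summary line at the head of the history. This is a hypothetical sketch, not any platform's code: `summarize` stands in for an LLM call, word counts approximate tokens, and each returned summary is what would also be embedded and stored in ChromaDB.

```python
from typing import Callable


def compact_history(history: list[str], budget_tokens: int, chunk: int,
                    summarize: Callable[[list[str]], str]) -> tuple[list[str], list[str]]:
    """Summarize the oldest `chunk` messages until the history fits `budget_tokens`.

    Returns (new_history, summaries); each summary would also go to the vector store.
    """
    if chunk < 2:
        raise ValueError("chunk must be at least 2 so the history actually shrinks")

    def tokens(msgs: list[str]) -> int:
        return sum(len(m.split()) for m in msgs)  # crude word-count proxy for a tokenizer

    summaries: list[str] = []
    while tokens(history) > budget_tokens and len(history) > chunk:
        oldest, rest = history[:chunk], history[chunk:]
        summary = summarize(oldest)  # an LLM call in a real system
        summaries.append(summary)
        history = [f"[Summary] {summary}"] + rest  # compressed start of the history
    return history, summaries
```

If one pass is not enough, the next pass summarizes the previous summary together with the next-oldest messages, so older material is compressed progressively harder.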

Single-Layer Memory vs Three-Layer Architecture

Pros

- Three-layer: Handles all time scales — seconds (Redis), weeks (ChromaDB), months (summaries)

- Three-layer: Constant per-message cost regardless of relationship length

- Three-layer: Graceful degradation — if one layer fails, others compensate

- Three-layer: Each layer optimized for its use case — speed, search, compression

Cons

- Single-layer (context only): Simpler to implement but conversations reset constantly

- Single-layer (vector only): Good for recall but loses immediate conversational flow

- Single-layer (summary only): Loses specific details in compression

- Three-layer drawback: More infrastructure (Redis + ChromaDB + embedding model)

Part 5: Why most AI companions don’t do this

If vector memory is this good, why don’t all AI companions use it?

Infrastructure cost. Running ChromaDB, an embedding model, and Redis alongside the LLM adds complexity and hosting costs. For a small operation, it might mean $50-100/month in additional infrastructure — significant when margins are thin.

Engineering complexity. Building a reliable memory pipeline requires handling edge cases: what happens when the vector database is down? When an embedding model produces garbage? When two contradictory memories are retrieved? Each edge case requires thoughtful engineering.
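One way to handle the first edge case, a vector database outage, is to treat long-term memory as optional: if retrieval raises, the bot answers from recent history alone instead of failing the whole request. A minimal sketch, assuming hypothetical `search_memories` and `recent_history` callables supplied by the application; the retrieval backend itself is abstracted away.

```python
import logging
from typing import Callable

logger = logging.getLogger("memory")


def gather_context(query: str,
                   search_memories: Callable[[str], list[str]],
                   recent_history: Callable[[], list[str]]) -> dict:
    """Return memories plus recent history, falling back to history alone on failure."""
    history = recent_history()
    try:
        memories = search_memories(query)
    except Exception:  # in real code, catch the vector DB client's specific errors
        logger.warning("memory retrieval failed; using recent history only", exc_info=True)
        memories = []
    # Skip empty hits and memories that duplicate a message already in recent history.
    memories = [m for m in memories if m and m not in history]
    return {"memories": memories, "history": history}


def vector_db_down(_query: str) -> list[str]:
    raise ConnectionError("vector database unreachable")


context = gather_context("rough day at the office", vector_db_down,
                         lambda: ["User: hey", "AI: hi!"])
print(context)  # {'memories': [], 'history': ['User: hey', 'AI: hi!']}
```

The user gets a slightly less informed reply instead of an error, which is the "graceful degradation" listed among the three-layer pros; the logged warning is what tells operators that the memory layer needs attention.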

Embedding model costs. Every conversation segment needs to be embedded (converted to a vector). With a commercial embedding API, that’s roughly $0.0001 per embedding. Small per-message, but it adds up at scale — and you need a separate model for this, not just the chat LLM.

Most users don’t notice immediately. The painful truth: memory only becomes obviously valuable after 5-7 days of consistent use. Many users try a bot for one session and move on. The investment in memory infrastructure pays off for retention, not acquisition.

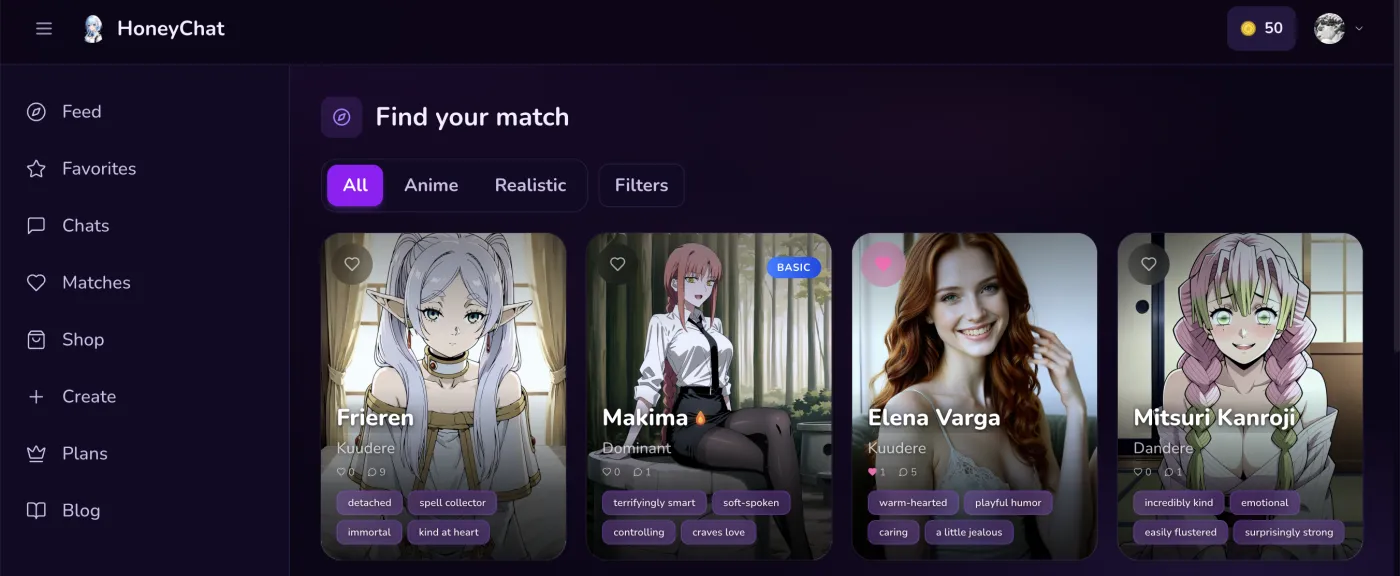

HoneyChat web app — dark UI with character gallery

This is why you see a clear correlation between platform maturity and memory quality. The platforms that have been around long enough to care about retention — HoneyChat, Replika (at Ultra tier), Nomi — have invested in memory. The fly-by-night operations haven’t.

Part 6: Testing memory in practice

I ran a standardized memory test across five platforms. Same methodology, same test statements, same time intervals.

The 7-Day Memory Test

Day 1: Plant three facts

Tell the AI three specific things: your pet's name, your job, and something you're looking forward to. Example: 'My cat is called Pixel, I work in data engineering, and I have concert tickets for next Saturday.'

Day 3: Test implicit recall

Mention a related topic without referencing the original fact. Say 'I had a rough day at the office.' If the AI connects it to your data engineering job, long-term memory is working. If it asks what you do, it's not.

Day 5: Test emotional memory

Reference a mood, not a fact. Say 'I'm feeling better today' without context. Does the AI reference a previous conversation where you were stressed or upset? Emotional context in memory is the hardest and rarest.

Day 7: Direct recall test

Ask directly: 'What's my cat's name?' and 'What concert am I excited about?' These are the easiest tests — if the AI fails here, it has no meaningful long-term memory at all.

Scoring

Grade each of the four checks (Day 3, Day 5, and the two Day 7 questions) as pass (1 point), partial (0.5), or fail (0). 4/4 = production-grade memory. 3/4 = decent memory. 2/4 = basic facts only. 1/4 or 0/4 = no real long-term memory.

Results

7-Day Memory Test Results

| | HoneyChat | Character.AI | Replika (Ultra) | Candy AI | Small TG Bot |
|---|---|---|---|---|---|
| Day 3: Implicit recall | Pass | Fail | Pass | Fail | Fail |
| Day 5: Emotional memory | Pass | Fail | Partial | Fail | Fail |
| Day 7: Direct facts | Pass | Pass | Pass | Partial | Fail |
| Day 7: Event recall | Pass | Partial | Pass | Fail | Fail |
| Score | 4/4 | 1.5/4 | 3.5/4 | 0.5/4 | 0/4 |
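The half-point scores in the table follow from counting a pass as 1, a partial as 0.5, and a fail as 0. The snippet below recomputes each total from the table's own grades; `POINTS`, `RESULTS`, and `score` are illustrative names.

```python
POINTS = {"Pass": 1.0, "Partial": 0.5, "Fail": 0.0}

# Grades copied from the table, in row order:
# Day 3 implicit, Day 5 emotional, Day 7 direct facts, Day 7 event recall.
RESULTS = {
    "HoneyChat": ["Pass", "Pass", "Pass", "Pass"],
    "Character.AI": ["Fail", "Fail", "Pass", "Partial"],
    "Replika (Ultra)": ["Pass", "Partial", "Pass", "Pass"],
    "Candy AI": ["Fail", "Fail", "Partial", "Fail"],
    "Small TG Bot": ["Fail", "Fail", "Fail", "Fail"],
}


def score(grades: list[str]) -> float:
    """Sum the points for one platform's four graded checks."""
    return sum(POINTS[g] for g in grades)


for platform, grades in RESULTS.items():
    print(f"{platform}: {score(grades)}/4")
```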

The results track almost perfectly with what the architecture predicts. HoneyChat (semantic vector memory) passes everything, including implicit recall and emotional context. Character.AI (fact extraction) passes direct questions but fails implicit and emotional tests. Replika Ultra (manual + some automatic) does well but isn’t perfect on emotional context. Small Telegram bots with no memory system fail everything.

Part 7: The embedding quality problem

Not all vector memories are created equal. The quality of the embedding model directly determines the quality of recall.

A weak embedding model might not connect “I’ve been thinking about switching careers” with a conversation from two weeks ago where you said “my job feels like a dead end.” To a human, these are obviously related. To a mediocre embedding model, they might not be similar enough to trigger retrieval.

The best embedding models (text-embedding-3-large from OpenAI, nomic-embed-text from Nomic AI) capture:

- Semantic similarity (same topic)

- Emotional tone (both expressing dissatisfaction)

- Implicit connections (“switching careers” implies job dissatisfaction)

Cheaper models capture only the first. This is why two platforms can both claim to have “AI memory” and deliver wildly different experiences.

Part 8: Privacy implications

Here’s the uncomfortable truth about AI memory: for the AI to remember you, your conversations must be stored somewhere.

Memory vs Privacy Trade-offs

What's stored

Vector embeddings (mathematical representations) and, in most systems, the raw text as well. The text is needed to generate the embedding, and retrieved memories are injected into the prompt as text, so most systems keep both. Some experimental systems generate embeddings on-device, sending only the vector to the server.

Who has access

The platform operator. This is true for every AI companion with memory — there's no way around it without on-device processing. Choose platforms that minimize data collection (no email, no third-party tracking).

Can you delete it?

Varies by platform. HoneyChat allows per-character memory reset. Character.AI allows individual Chat Memory deletion. Replika allows memory management. Always check before committing to a platform.

Telegram advantage

Telegram-native bots don't require email or real-name registration. Your memory data is linked to a Telegram ID, not your identity. This is inherently more private than web platforms requiring Google/Apple login.

The ideal future state is on-device embedding generation — your phone creates the vector, sends only the mathematical representation to the server, and the server never sees the raw text. This is technically feasible but not widely implemented yet.

Conclusion

The reason your AI forgets you is engineering, not intelligence. Context windows are finite, and without a vector memory system to supplement them, every conversation beyond the window’s edge is permanently lost.

The fix — vector embeddings stored in a database like ChromaDB, retrieved by semantic similarity, and injected into the context window — is technically straightforward but requires meaningful infrastructure investment. That’s why it exists in production-grade platforms and is absent from hobby projects.

If you want to experience the difference firsthand: use any AI bot without memory for a week, then switch to one with semantic memory for a week. The contrast is stark. I tested this myself by switching between platforms — HoneyChat’s semantic memory on even its free tier blew me away. I use the web app at honeychat.bot on my laptop for longer technical experiments and Telegram on my phone for daily chatting — 20 messages per day free, no registration required.